James Chang / Work / The Judge Tool / Under the hood


A full-stack KCBS judging platform, shipped in 20 days.

16,424 lines of code, 113 passing tests, 12 Prisma models, 62 auth-guarded server actions. Built methodically across 13 sessions with a transparent AI-assisted workflow.

By the numbers

AI development workflow

How it actually works

- Terminal-only development. Every line goes through Claude Code (CLI). No IDE inline suggestions.

- CLAUDE.md as context bridge. A 103-line file gives each new session the full project context: stack constraints, business rules, auth patterns, seed data. Eliminates "starting from zero every conversation."

- Session = 1 conversation = 1 PR. Each session produces exactly one PR, ranging from focused fixes (PR #3: 15 files) to large feature ships (PR #1: 130 files).

- Human directs, AI implements. Humans made the architecture decisions (feature modules, security model, UX flows). The AI generated the implementation, tests, and documentation. Code review happened before merge, not after.

Architecture

Tech stack

Frontend

Backend

Testing

Infrastructure

Feature modules

5 domain modules following a consistent pattern: actions/, components/, types/, schemas/, store/, index.ts barrel.

competition

judging

scoring

tabulation

users

Lessons learned

What worked

CLAUDE.md is critical. 103 lines of cross-session context eliminate re-briefing at the start of every session.

Pure functions for business logic. KCBS scoring, box distribution, and validation are all extracted into pure functions and tested (113 passing tests).

Feature module pattern. 5 modules with identical structure keep the code navigable as it scales.

E2E simulation catches what unit tests miss. Found 3 scoring bugs that passed code review.
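As a minimal sketch of the "pure functions for business logic" point, a score check could look like the TypeScript below. The allowed values (1, 2, and 5 through 9) come from the score-entry caption on this page; the `ScoreCard` shape, its field names, and both function names are assumptions for illustration, not the project's actual code.

```typescript
// Allowed values as stated in this page's score-entry caption: 1, 2, 5-9.
const ALLOWED_SCORES: ReadonlySet<number> = new Set([1, 2, 5, 6, 7, 8, 9]);

// Hypothetical shape of one judge's score card; field names are assumptions.
interface ScoreCard {
  appearance: number;
  taste: number;
  tenderness: number;
}

// Pure predicate: no I/O and no database access, so the same input
// always produces the same output and it can be unit-tested directly.
function isValidScore(value: number): boolean {
  return Number.isInteger(value) && ALLOWED_SCORES.has(value);
}

// Returns the names of the fields that hold a disallowed value,
// in the order the fields appear on the card.
function invalidFields(card: ScoreCard): string[] {
  return (Object.keys(card) as (keyof ScoreCard)[]).filter(
    (field) => !isValidScore(card[field]),
  );
}
```

Because neither function touches the database or the session, a test only needs to pass in plain objects, which is what makes this style cheap to cover.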

What was hard

Security is easier to build in than bolt on. Auth guards should be in commit 1, not commit 3.

AI generates code faster than you can review. Added automated verification (tests, simulation) instead of rushing reviews.

Don't build the whole app in one commit. PR #1 (130 files) made debugging nearly impossible. Incremental PRs are better.

Sketch navigation before coding. Avoided dead routes and rework.
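Returning to the security lesson above: one way to make auth guards hard to forget from the first commit is to route every server action through a single guard wrapper. The sketch below is an illustration under stated assumptions. The `Session` shape, the role names, the `withAuth` helper, and the injected `getSession` lookup are all hypothetical and are not taken from the project's code.

```typescript
// Hypothetical role and session shapes; the project's real types are not shown on this page.
type Role = "organizer" | "captain" | "judge";

interface Session {
  userId: string;
  role: Role;
}

// Wraps an action so the session and role checks run before any action code.
// `getSession` is injected (an assumption) so the guard can be tested with a stub.
function withAuth<Args extends unknown[], Result>(
  getSession: () => Promise<Session | null>,
  allowed: readonly Role[],
  action: (session: Session, ...args: Args) => Promise<Result>,
): (...args: Args) => Promise<Result> {
  return async (...args: Args): Promise<Result> => {
    const session = await getSession();
    if (session === null) {
      throw new Error("Unauthorized: not signed in");
    }
    if (!allowed.some((role) => role === session.role)) {
      throw new Error(`Unauthorized: role ${session.role} may not call this action`);
    }
    // Only reached when both checks pass.
    return action(session, ...args);
  };
}
```

With a wrapper like this, an unguarded action has to be written deliberately rather than by omission, and the guard itself can be unit-tested with a stub session instead of a running auth provider.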

Live screenshots

The public marketing site at thejudgetool.com.

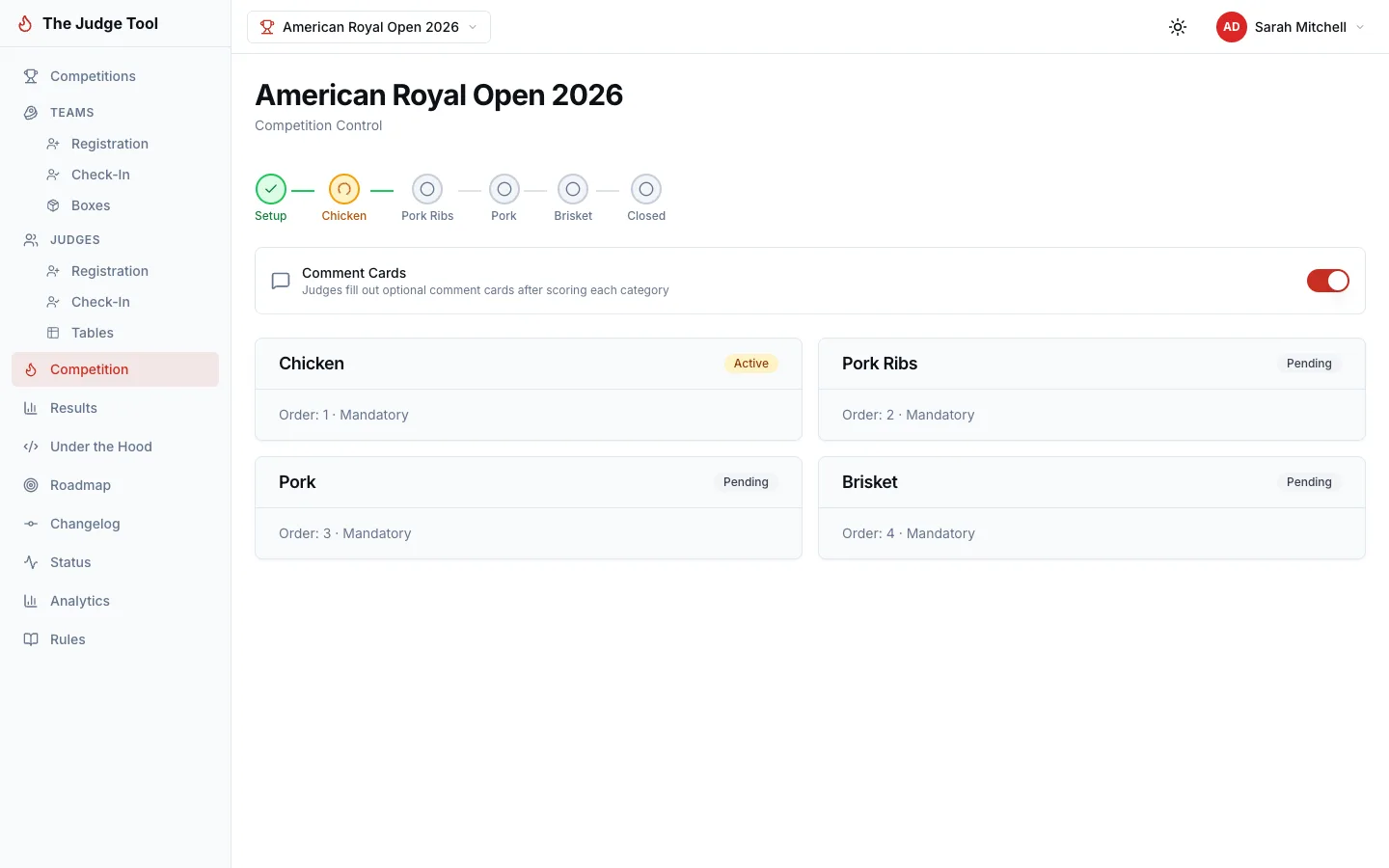

Organizer dashboard — category advancement and live competition control.

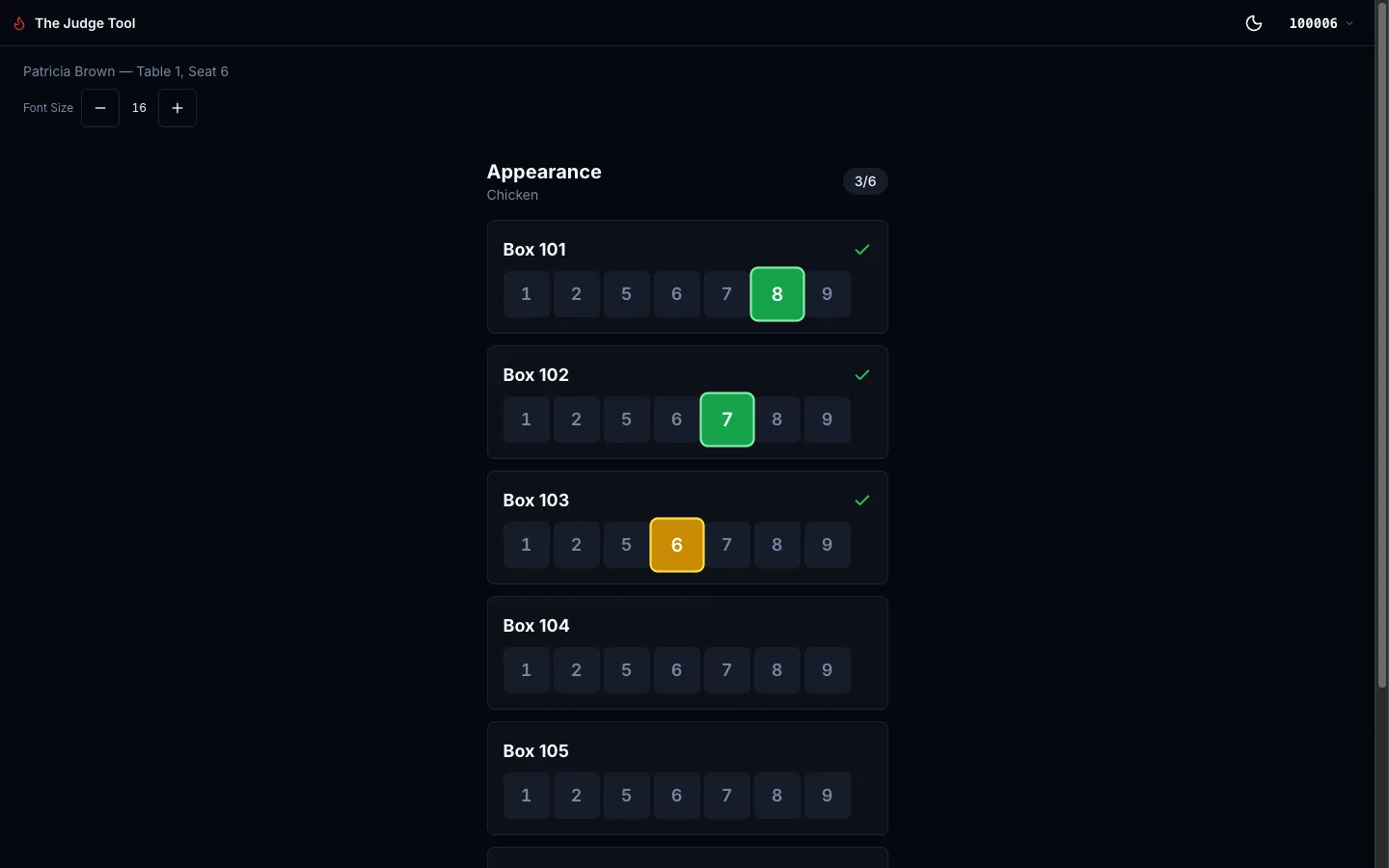

Judge scoring interface — KCBS-compliant score entry (1, 2, 5–9).

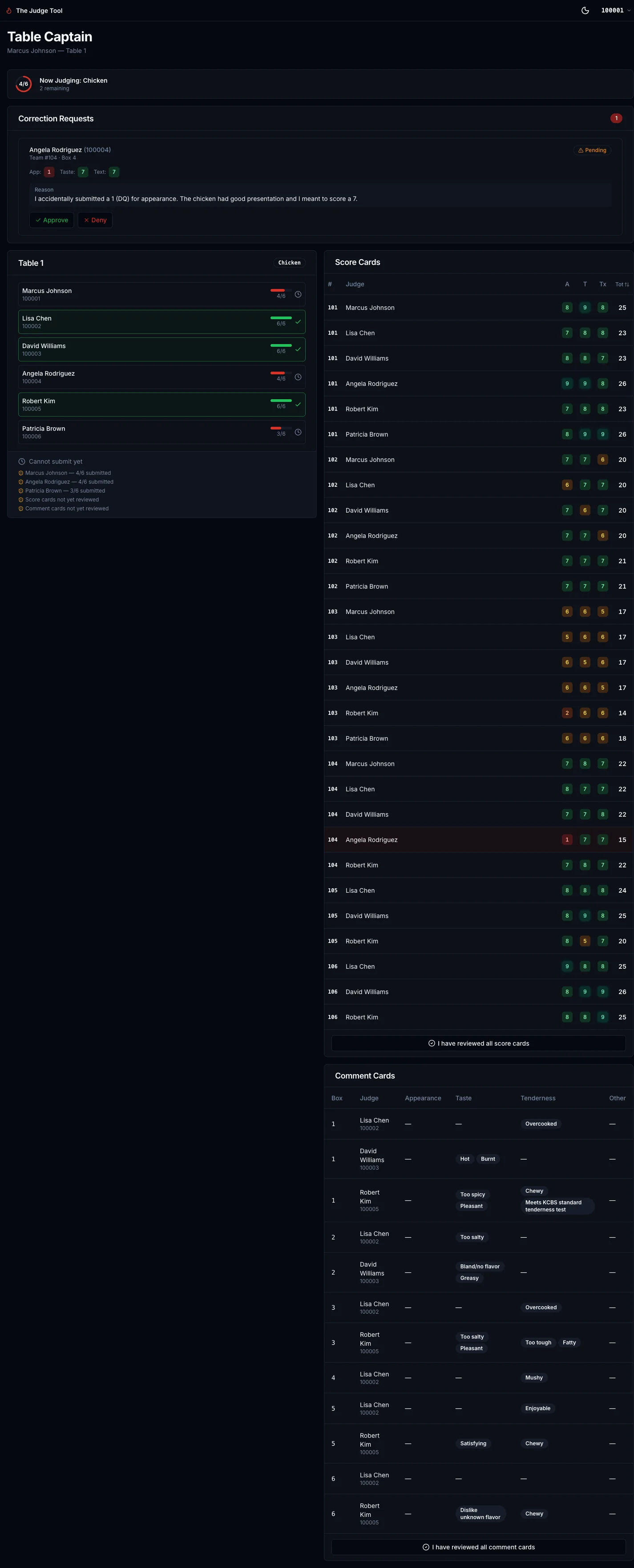

Table captain view — score review and correction-request approvals.